On this web page, you will find the results obtained from our survey on the use of materials science, quantum chemistry, and solid-state physics codes.

The initial announcement of the survey was on August 22nd, 2022, through the Psi-k mailing list. The data collection for this version of the results was concluded on March 6th, 2023. Although the survey is still open (link here) for new participants, the current report represents the findings up until that date. We will continue to update the results periodically as more responses are received.

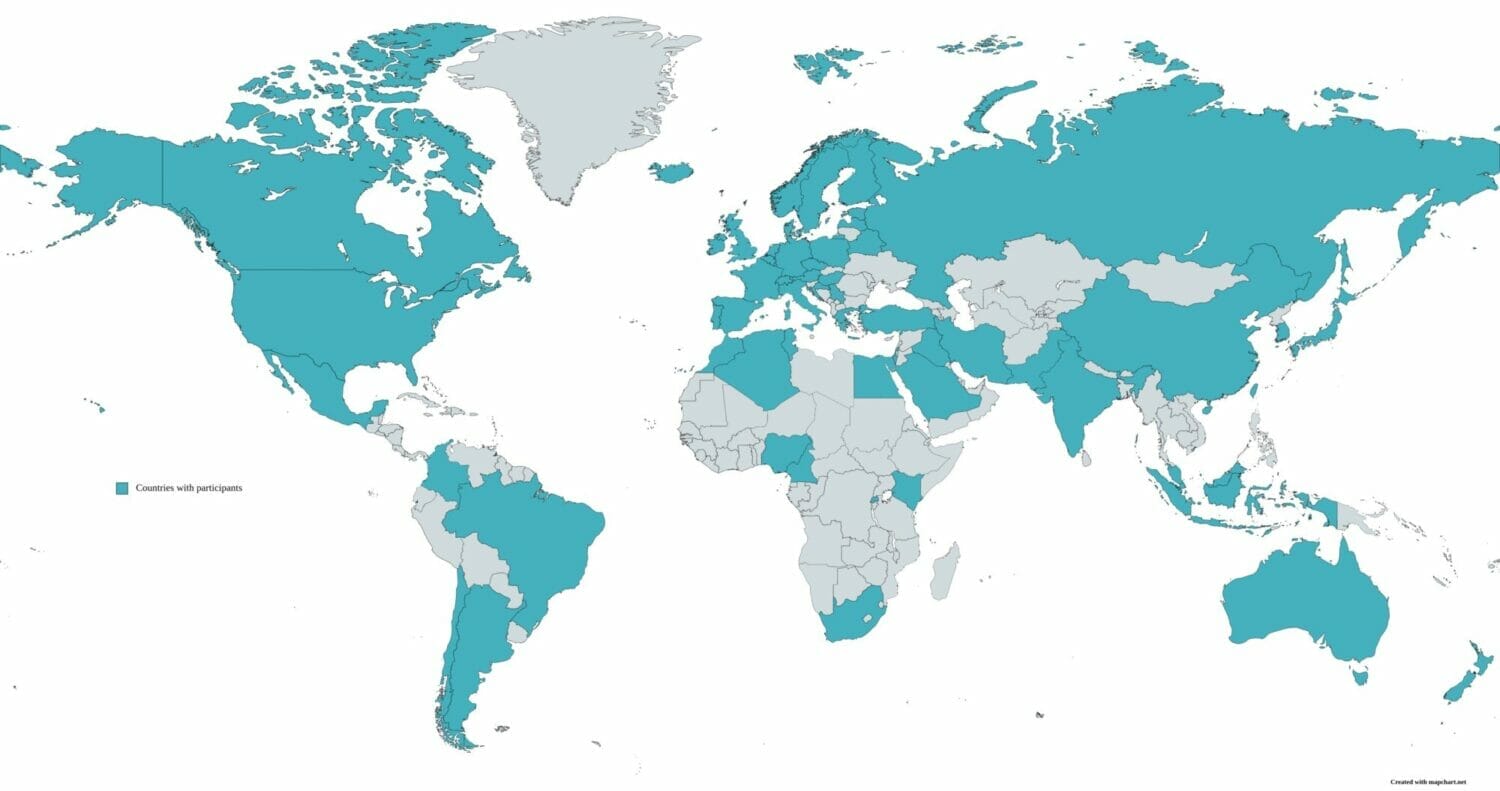

The survey's outreach has been very satisfying, with over 450 participants worldwide representing various communities. This diverse participation enables us to identify several trends, which are elaborated upon below. We have also analyzed the responses from both academic and industrial participants to provide specific insights for each group.

01 Reach of the survey

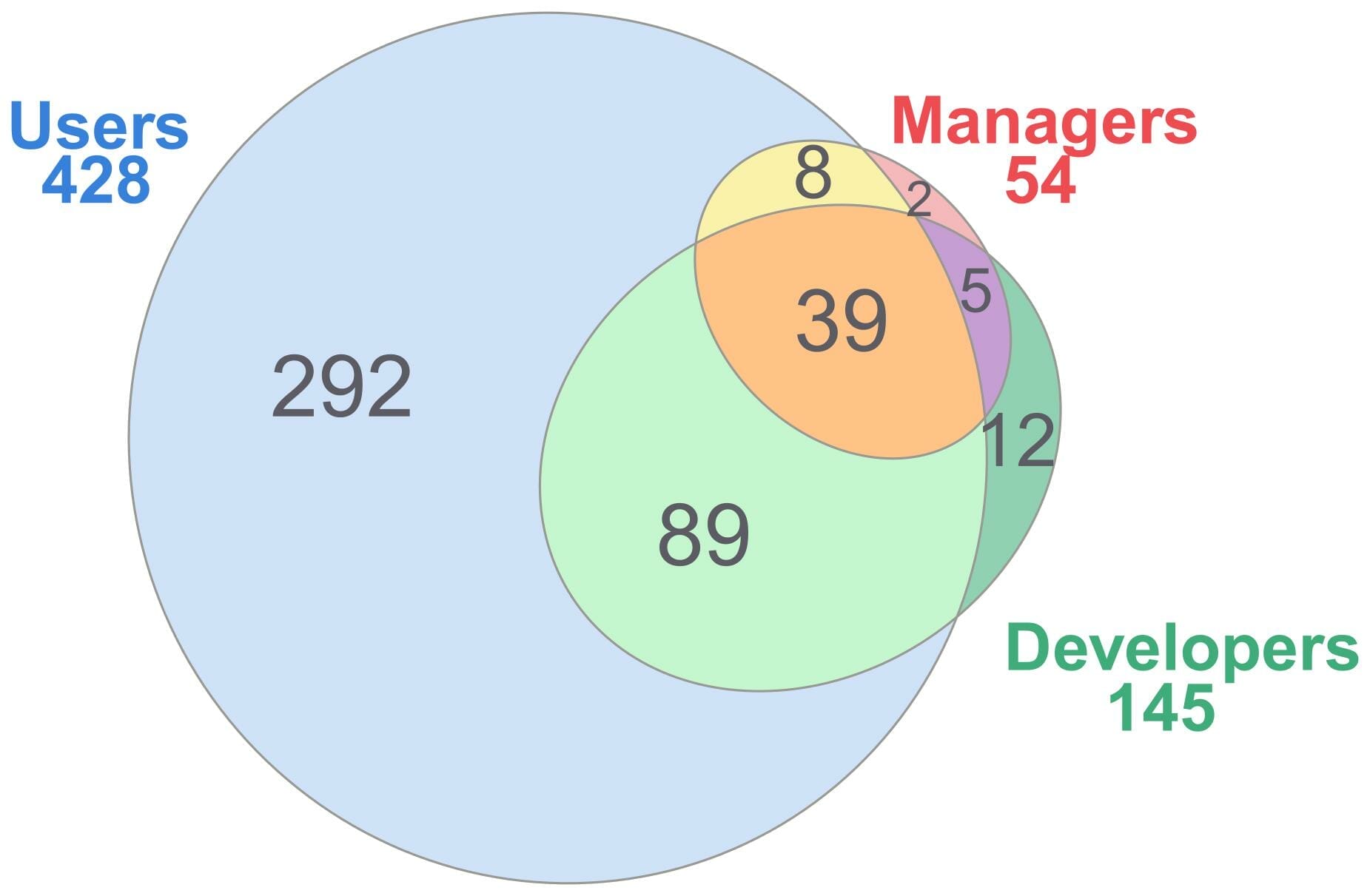

We have collected 450 replies to the survey (as of Oct 7th, 2022) coming from more than 50 countries. Most respondents are at least users of a code, and roughly a third are developers, as represented below.

As expected, most of the responses (92%) come from the academic world. Among these, professors, post-doctoral researchers and PhD students are almost equally represented.

02 Studied systems

More than 70% of participants study (at least) 3D systems, typically including 11-100 atoms. Most researchers study multiple types of systems (3 on average among those suggested). Simulations are also reaching larger sizes than were previously attainable, with more than 40% of participants studying systems of 100-500 atoms.
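Because participants could select several system types, the percentages per option add up to more than 100%. The sketch below illustrates how such shares and the average number of selections can be computed. The answers and the `option_shares` helper are hypothetical and are not taken from the survey data.

```python
# Hypothetical multiple-choice answers (NOT survey data):
# each respondent may tick several system types.
answers = [
    {"3D", "2D", "molecules"},
    {"3D"},
    {"2D", "molecules"},
    {"3D", "surfaces"},
]


def option_shares(answers):
    """Return the percentage of respondents who selected each option."""
    n = len(answers)
    options = sorted(set().union(*answers))
    return {opt: 100 * sum(opt in a for a in answers) / n for opt in options}


shares = option_shares(answers)
avg_selected = sum(len(a) for a in answers) / len(answers)

for option, share in shares.items():
    print(f"{option}: {share:.0f}%")
print(f"average number of selected options: {avg_selected:.1f}")
```

In this toy example the shares sum to 100% times the average number of selected options, which is why multi-select percentages should not be read as parts of a whole.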

03 Electronic-structure methods

The vast majority (97%) of participants use at least DFT. Hybrids are used by a third of respondents, and other electronic-structure methods such as Hartree-Fock or GW are used by less than 18% of participants. Industrial researchers make up a larger share of those using hybrids, Hartree-Fock, CC or MP methods than of those using DFT or GW. This can be explained in part by the larger fraction of industrial researchers focusing on molecules (see previous figure).

As can be expected from the widespread use of DFT together with the stronger focus on 3D systems, plane waves are used by a large fraction of researchers (75%). Next, Gaussian orbitals are used by a third of participants. The same reasoning as for the electronic-structure methods explains the larger fraction of industrial researchers using Gaussian orbitals, which are typically used to study molecules.

Finally, the core-electron descriptions are slightly more evenly distributed: 54% of participants use PAW, 49% use norm-conserving pseudopotentials, 36% use all-electron methods, and 29% use ultrasoft pseudopotentials. We note that, unlike academic researchers (who mainly use PAW), industrial researchers mainly use norm-conserving pseudopotentials and all-electron computations.

04 Machines used for computations

Most of the respondents indicated using a local cluster (66%), a Tier-1 HPC cluster (62%), or their personal and/or professional computer (55%). Only a third have access to a Tier-0 HPC cluster, and a minority use cloud services.

Compared to academic researchers, those from industry use fewer external clusters, but are more inclined to use cloud services. The use of personal and/or professional computers and local clusters is similar in the academic and industrial worlds. We note that researchers performing high-throughput computing do not use significantly different types of machines than those running high-performance computations. One can assume that most researchers run simulations on whichever machine they have access to, no matter which type of computing they intend to perform.

05 Parallelization

MPI is the most widely used form of parallelization, with 58% of participants always using it and only 5% never using it. The use of OpenMP is more evenly distributed, with 60% of participants always or often using it. On the other hand, GPU acceleration is not yet used by a majority of participants (58% never use it, 24% rarely use it).

Most participants using OpenMP also use MPI and rarely GPUs. Those using MPI rarely use GPUs and use OpenMP in an evenly distributed manner. Finally, participants using GPUs typically do not use another type of parallelization.

06 General aspects of simulation codes

Academic and industrial researchers find different aspects of the codes they are using important. First, industrial participants find the price of the code to be less important than academic researchers do. Industrial researchers also pay more attention to the speed, scalability and user friendliness of a code. The accuracy and ease of installation are of the same importance in both industry and academia: accuracy is very important, and the ease of installation matters less than other aspects (the importance of pre/post-processing tools is similar).

07 Preferred build system

It is clear from the figure below that users prefer make to build their code: 56% chose make, while CMake, the next most preferred build system, represents only 13% of users and is closely followed by precompiled executables (12%). Developers also prefer make (55%), but CMake is well-liked too (22%).

08 Output formats

There does not seem to be a strong incentive to format the main output files: simple plain text is the preferred option by far. For the secondary output files, however, a formatted binary file is preferred. Note that these options are already those applied by many codes. This means that either there is no need for formatted text files, or the force of habit is too strong compared to the advantages of formatted text files for most participants.

09 Most used and developed codes and features

The participants in this survey mainly use VASP, followed by Quantum ESPRESSO and ABINIT. Except for ABINIT, DMol3 and NWChem, the order presented here is in relatively good agreement with the ranking of the most cited codes (in 2021).

Overall, 64 simulation codes are represented by the participants, even though 46 codes are represented by fewer than 5 participants. Such a large number of represented codes still allows us to draw interesting trends and conclusions.

As could be expected, the most used features are also the most basic ones: the ground-state electronic properties (band structure, density of states, ...) and geometry optimization. These steps are necessary in most cases before going further with additional features. Beyond these, many features show similar interest: DFT+U, magnetism, hybrid functionals, DFPT and vibrational properties (in case of molecules), optical properties, nudged elastic bands, and ab initio molecular dynamics. Some of these may be considered basic features today (DFT+U, magnetism, vibrational properties) and are present in many codes, while others (nudged elastic bands, ab initio molecular dynamics, DFPT) are developed in only some codes, sometimes codes specifically designed for them (e.g., CP2K for ab initio molecular dynamics).

Note that only features mentioned a minimum of 15 times are represented in the figure below. This represents 23 features, out of 80 in total, demonstrating the diversity of possible features.
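The thresholding described above can be sketched as follows. This is a minimal illustration, not the analysis script used for the survey: the answers, the `frequent_features` helper and the toy threshold of 2 are all hypothetical (the figure uses a minimum of 15 mentions).

```python
from collections import Counter

# Hypothetical answers (NOT survey data): each respondent lists the features they use.
responses = [
    ["geometry optimization", "band structure", "DFT+U"],
    ["geometry optimization", "band structure"],
    ["geometry optimization", "nudged elastic band"],
]


def frequent_features(responses, min_mentions):
    """Return (feature, count) pairs mentioned at least min_mentions times."""
    # set(answer) ensures a respondent counts at most once per feature.
    counts = Counter(feature for answer in responses for feature in set(answer))
    kept = [(name, n) for name, n in counts.items() if n >= min_mentions]
    # Sort by descending count, then alphabetically for a stable order.
    return sorted(kept, key=lambda item: (-item[1], item[0]))


# Toy threshold of 2 for this small example; the survey figure uses 15.
top = frequent_features(responses, min_mentions=2)
for name, n in top:
    print(f"{name}: {n}")
```

With a threshold, rarely mentioned features drop out of the figure while still counting towards the total number of distinct features.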

10 Feedback from coordinators

Two thirds of coordinators feel a strong incentive to extend the existing codes to GPU acceleration. The main reasons are that the trend in HPC centres is moving towards GPUs, and that it could be a source of speedup for computations. This last point seems controversial, with many of the coordinators who are not in favor of moving towards GPU acceleration pointing out that the speedup is not large enough to justify the effort. With few exceptions, coordinators also state that developing with GPU acceleration is not an easy task, that it is time consuming and requires manpower that they might not have (see figure below: most groups are made of fewer than 5 developers). Some also point out that GPU acceleration is not suitable for some tasks that need a lot of memory or that it reduces the readability of the code.

Concerning the greatest challenges to come, many coordinators mention being able to exploit new hardware and having a code that is portable across heterogeneous architectures (including cloud-based ones) or compilers. The transition to exascale computing is expected to be difficult. Many also find that the codes are too complex, meaning that there is a high entry barrier and that it is hard to find developers. Relying on a more modern programming language and a modular implementation would attract more developers, as would working as a community to find better solutions and join efforts (e.g., through the development and use of libraries). Funding is also a recurrent problem, in particular for long-term maintenance of a code. Machine learning is also mentioned a few times as a way to accelerate computations. Finally, improving the accuracy of first-principles methods is also of great importance.

11 Feedback from developers

In the following, we will review the general feedback of developers across all codes. A similar exhaustive analysis for each code is possible but would be lengthy and difficult to digest. It could be interesting for specific code developers though, who can contact us for more details.

The main reason developers started contributing is that they needed a feature that was unavailable in the code they were using. One could imagine that the typical scenario is a researcher who started to learn about computational quantum chemistry or solid-state physics by using a given code, carries out a project with said code and needs to compute something that is not available within this code. During their post-doc or PhD, they then start to implement this new feature. Coming back to one of the difficulties found by coordinators, this also explains the difficulty of having long-term maintenance for all features of a code: once the researcher's project is done, if there is nobody working on that part anymore, the maintenance can be low (or nonexistent). Interestingly, the fact that the researcher already knew the programming language also seems important: maybe some researchers would like a new feature, but do not know the programming language and do not have the time, or do not dare, to start learning and contributing themselves.

Overall, adding tests and collaborating with other developers is considered to be relatively easy by most developers. This is most likely due to collaborative platforms such as GitHub or GitLab, which are used by many codes. Interfacing the codes with external libraries is considered a bit more difficult, but not as much as starting to implement a new feature or grasping the structure and logic of the code. The fact that these last two aspects are the hardest can be expected, since they demand efforts specific to each code, while collaborating or developing tests is usually done in a similar way in other projects and new developers of a code might already have experience in those. Additionally, the test suite is usually considered satisfactory. Performance testing of the codes seems less complete than the testing of functionalities and numerical results.

Fortran is both the most used and the least liked programming language. This is most likely due to the fact that many codes were developed in the 80s and 90s, and Fortran was the sensible choice at that time. Since then, many new programming languages have emerged, but rewriting a whole code containing hundreds of thousands of lines (or even millions) is a task that has been avoided in most cases. The languages that unsatisfied developers would prefer are Python (together with C/C++ libraries), mainly for its high level, C++ for its performance, Julia because it is modern and efficient, or Rust for similar reasons.

12 Feedback from users

The main reason to start using a code is that it has unique or rare features (corresponding to what researchers want to compute). If multiple codes are available for a given feature, performance (speed, accuracy) comes next, close to the experience gathered by colleagues: if many researchers from the group already use a given code, this code will obviously be adopted more easily. Ease of use is also important: for a given feature, if many researchers from the same lab use a given code but another one is easier to use for this purpose, this will also weigh in the decision process.

Once a researcher is used to a code and has extensive experience with it, this becomes the main reason to keep using it. We suspect this is true even if it is clear to the researcher that another code might be better suited for the purpose (to some extent). This is also reflected in the fact that using a code once we are used to it is one of the easiest aspects of the codes, and starting to use a code is one of the hardest, together with installing and compiling it (see figure below). Finally, only a few researchers do not have a choice with respect to the code they are using. In most of these cases, the code is imposed by the head of the group for all their researchers, or simply during teaching for students who then start a PhD.

Unfortunately, the open-source/free aspect of a code was not suggested to all participants during the survey. Yet, a few researchers indicate this to be the most important reason for them to use a code. This important aspect is therefore most likely not sufficiently well represented in the collected data but should be considered for future developments.

Users are generally satisfied with the performance of the codes. The tutorials, documentation, and support come next, with a slightly lower satisfaction overall. The pre/post-processing tools are slightly less satisfactory, but not significantly. The recommendation score given by users to the code they are using is always between 3 and 5 (with two exceptions), with an average of 4.38 and a standard deviation of 0.7.
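As an illustration of how such summary statistics are obtained, the sketch below computes the mean and standard deviation of a small set of scores. The scores are made up for the example and are not the survey responses; the use of the population standard deviation (`pstdev`) rather than the sample one is also an assumption, since the report does not specify which was used.

```python
from statistics import mean, pstdev

# Made-up recommendation scores on a 1-5 scale (NOT the survey data).
scores = [5, 4, 5, 3, 4, 5, 4, 5]

avg = mean(scores)
# Assumption: population standard deviation; use statistics.stdev for the sample version.
spread = pstdev(scores)

print(f"mean = {avg:.2f}, standard deviation = {spread:.2f}")
```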

The availability of GPU acceleration in the codes is still far from obvious to all users. Indeed, for many codes, some users think it is available and some think it is not, in various proportions. In most cases, many users also simply do not know if it is available or not. This situation is most likely due to the status of such developments: GPU acceleration is usually not available for many features of the codes. Those who know it is available and use it are usually happy with the performance. Those who do not use it mostly state that they do not have access to GPUs, that it is not available for the features they are using, or that they do not see the advantage.